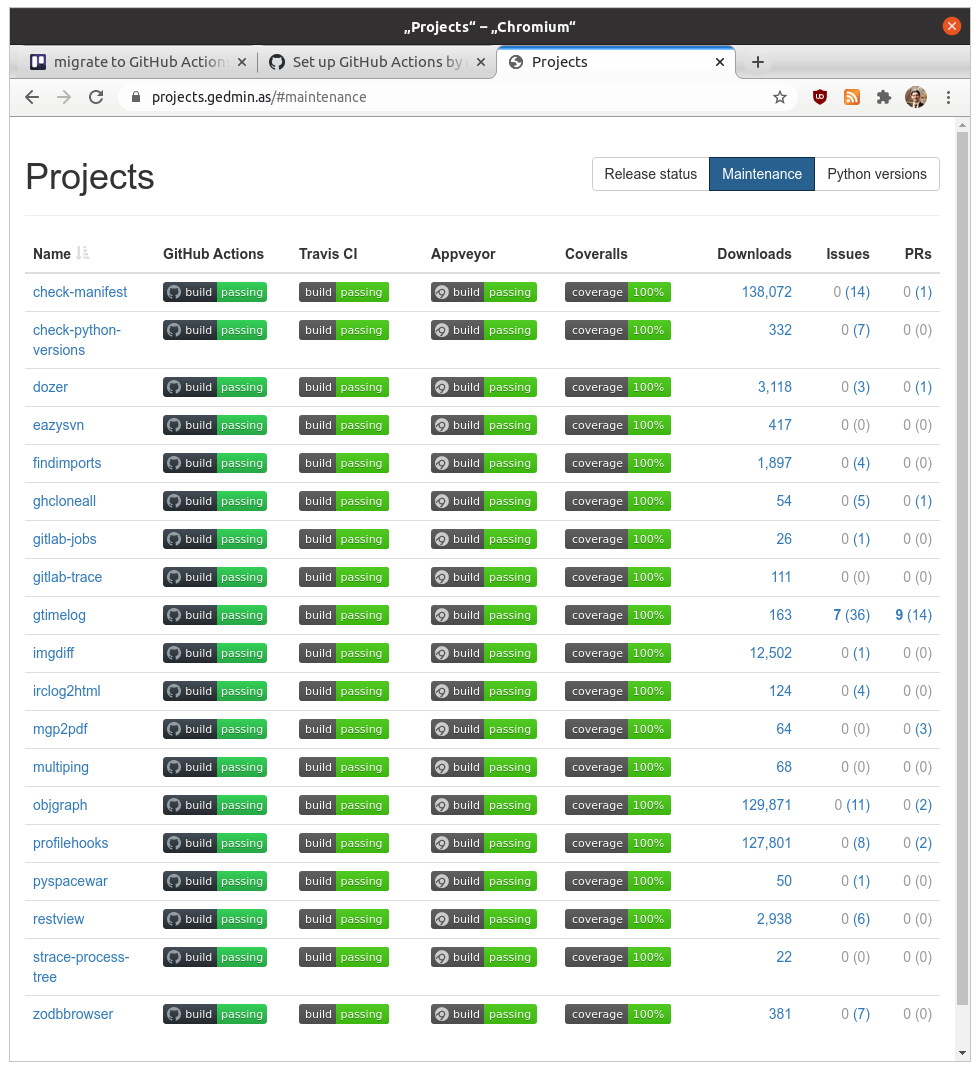

I am grateful to Travis CI for providing many years of free CI service to all of my FOSS projects. However, the free lunch is over, and I don’t want to keep asking for free build credits by email (the first 10,000 ran out in 10 days).

I’ve chosen to migrate to GitHub Actions. There are already helpful resources about this:

- Python in GitHub Actions by Hynek Schlawack

- The GitHub workflow template used by Zope Foundation projects

- The official documentation

In fact, part of the difficulty with the migration is that there’s too much documentation available, and all the examples do things slightly differently!

So here’s a bunch of tips and discoveries from converting over 20 repositories:

- The workflow is named `build` because the name of the workflow is used in the status badge, and I want my status badges to say `build` like they used to.

  ```yaml
  name: build
  ```

- It’s placed in a file named `.github/workflows/build.yml` (which doesn’t really matter, but I had to pick some name, so I decided to be consistent).

- I’m still ambivalent about all the examples restricting builds to one branch. I think I like that I’m not getting the double builds (branch vs PR) that I used to get with Travis/Appveyor, especially since every matrix row (“Python 3.8” etc.) shows up as a separate check in the UI.

- I’m testing multiple Python versions, and I’m putting the Python version in the pip cache key, because without it I still saw too many “building wheel …” messages during `pip install`.

  ```yaml
  - name: Pip cache
    uses: actions/cache@v2
    with:
      path: ~/.cache/pip
      key: ${{ runner.os }}-pip-${{ matrix.python-version }}-${{ hashFiles('tox.ini', 'setup.py') }}
      restore-keys: |
        ${{ runner.os }}-pip-${{ matrix.python-version }}-
        ${{ runner.os }}-pip-
  ```

- I’m still using Coveralls, but not through the official action (which doesn’t support Python, what). Note that the coveralls package on PyPI gained GitHub Actions support only in version 2.1.0, which no longer supports Python 2, so I’m skipping Coveralls uploads for my 2.7 and pypy2 builds.

  ```yaml
  - name: Report to coveralls
    run: coveralls
    if: "matrix.python-version != '2.7' && matrix.python-version != 'pypy2'"
    env:
      GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
      COVERALLS_PARALLEL: true
  ```

- Note that in the GitHub Actions expression language `'2.7'` is a string, and `2.7` is a float, so you must quote Python versions in the strategy matrix or the above `if` condition will not skip 2.7!

  ```yaml
  strategy:
    matrix:
      python-version:
        - "2.7"
        - "3.6"
        - "3.7"
        - "3.8"
        - "3.9"
        - "pypy2"
        - "pypy3"
  ```

  This is a good idea anyway, because Python 3.10 is coming, and in YAML `3.10` is just a spelling of the floating point number `3.1` with an unnecessary zero.

- I want linters (flake8, mypy, isort, check-manifest, check-python-versions) to have their own matrix rows, and I don’t want to vary Python versions for these. The easiest solution turned out to be defining two jobs with their own matrices, and to duplicate most of the build steps.

  ```yaml
  jobs:
    build:
      name: ${{ matrix.python-version }}
      strategy:
        matrix:
          python-version:
            - "3.6"
            ...
      steps:
        ...
        - name: Run tests
          run: tox -e py

    lint:
      name: ${{ matrix.toxenv }}
      strategy:
        matrix:
          toxenv:
            - flake8
            ...
      steps:
        ...
        - name: Run ${{ matrix.toxenv }}
          run: tox -e ${{ matrix.toxenv }}
  ```

  And yes, I’m putting `${{ matrix.toxenv }}` into the pip cache key for these:

  ```yaml
  - name: Pip cache
    uses: actions/cache@v2
    with:
      path: ~/.cache/pip
      key: ${{ runner.os }}-pip-${{ matrix.toxenv }}-${{ hashFiles('tox.ini') }}
      restore-keys: |
        ${{ runner.os }}-pip-${{ matrix.toxenv }}-
        ${{ runner.os }}-pip-
  ```

- tox-gh-actions doesn’t work the way tox-travis does and runs nothing at all if you don’t add custom configuration to a `tox.ini`.

- The workflow files are very verbose, compared to those of Travis CI!

- The checks UI is flaky, misleading, and not up to GitHub’s usual standards.
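
To show how several of these tips fit together, here is a rough sketch of a minimal workflow. It is not copied from any of my repositories: the branch name `master`, the `ubuntu-latest` runner, the `actions/checkout@v2` and `actions/setup-python@v2` steps, and the "Install tox" step are all assumptions made for illustration, so adjust them to your project.

```yaml
# Hypothetical minimal workflow, e.g. saved as .github/workflows/build.yml.
# Branch name, runner, and action versions below are assumptions.
name: build                    # this name is what the status badge shows

on:
  push:
    branches: [master]         # assumed default branch; limits push builds to it
  pull_request:
    branches: [master]         # PRs targeting that branch are still built

jobs:
  build:
    name: ${{ matrix.python-version }}
    runs-on: ubuntu-latest     # assumed runner
    strategy:
      matrix:
        python-version:
          - "3.8"              # quoted, so the value stays a string
          - "3.9"              # (an unquoted 3.10 would be read as the float 3.1)
    steps:
      - uses: actions/checkout@v2
      - uses: actions/setup-python@v2
        with:
          python-version: ${{ matrix.python-version }}
      - name: Install tox      # illustrative step, not from the examples above
        run: python -m pip install tox
      - name: Run tests
        run: tox -e py
```

With triggers written this way, a push to a pull request branch should run only the `pull_request` build, which is what avoids the branch-plus-PR double builds mentioned earlier.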

If you’re curious, you can look at my workflow file for findimports (very simple test suite, no linters) or workflow file for project-summary (tox, two matrices), or workflow file for check-manifest (a real 2D matrix of python-version × version control system).